If you're in marketing or SEO, you've probably seen the same question come up more and more: should we be investing in AI visibility right now?

It's a fair question. ChatGPT, Google AI Overviews, and Perplexity have moved fast, and the advice on what to do about it hasn't always kept up.

So instead of adding another opinion, we went to the data. This article is based on Promptwatch’s ongoing analysis of over 1 billion citations across ChatGPT, Google AI Overviews, Google AI Mode, Perplexity, Gemini, and Claude.

What the data shows is not just that AI search is growing. It shows something more specific: in most categories, AI answers already have clear winners, and most brands aren't among them.

And that gap is what makes the investment question more urgent than the noise suggests.

AI Search Is Already Sending Traffic (With or Without You)

Whether your buyers search on Google, open ChatGPT, or use Perplexity to help them make a decision, they're getting an AI answer.

Google AI Overviews now appear at the top of search results for a growing share of queries, changing what users see before they ever scroll. ChatGPT has reached 900 million weekly active users as of early 2026. Perplexity is growing fast as a standalone research tool, particularly among professional audiences.

These platforms are no longer something buyers try occasionally. They're part of how people research and make decisions every day.

And they work differently from traditional search in one way that matters for marketing teams.

In traditional search, a user types a query and gets a list of results to explore. In an AI answer, the platform synthesises a response, names specific brands, and presents it as a recommendation.

If your brand isn't cited in that response, you don't exist in that moment. There is no page two.

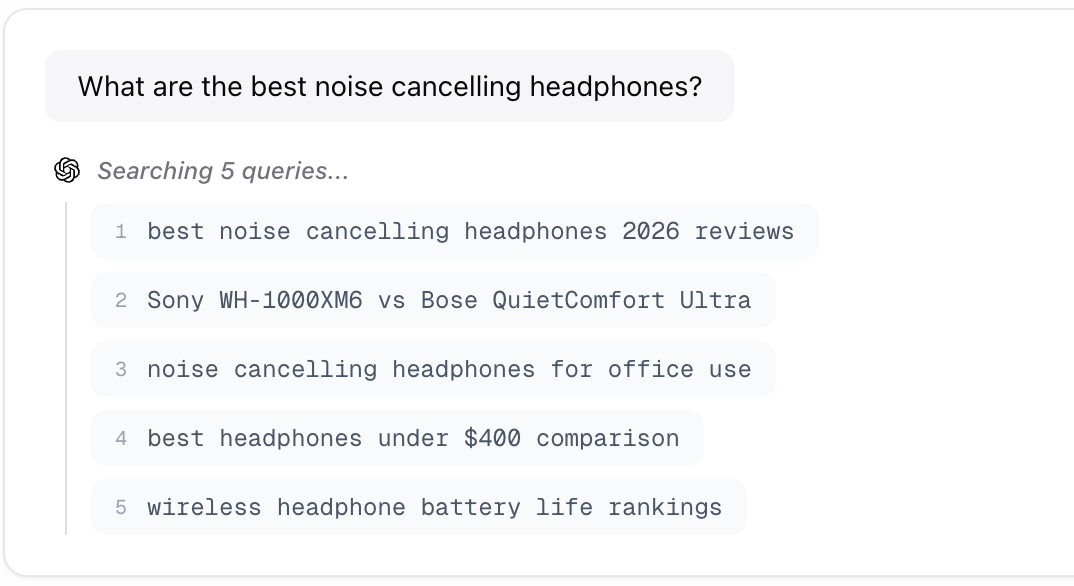

There's another layer to this that most teams don't account for. When someone types a single query into ChatGPT, the platform doesn't just answer that one question. It expands it into multiple sub-queries and searches each one separately before building its response.

A single query like "what are the best noise cancelling headphones" can trigger five separate searches at once, each one a different angle of the question, each one citing its own set of brands.

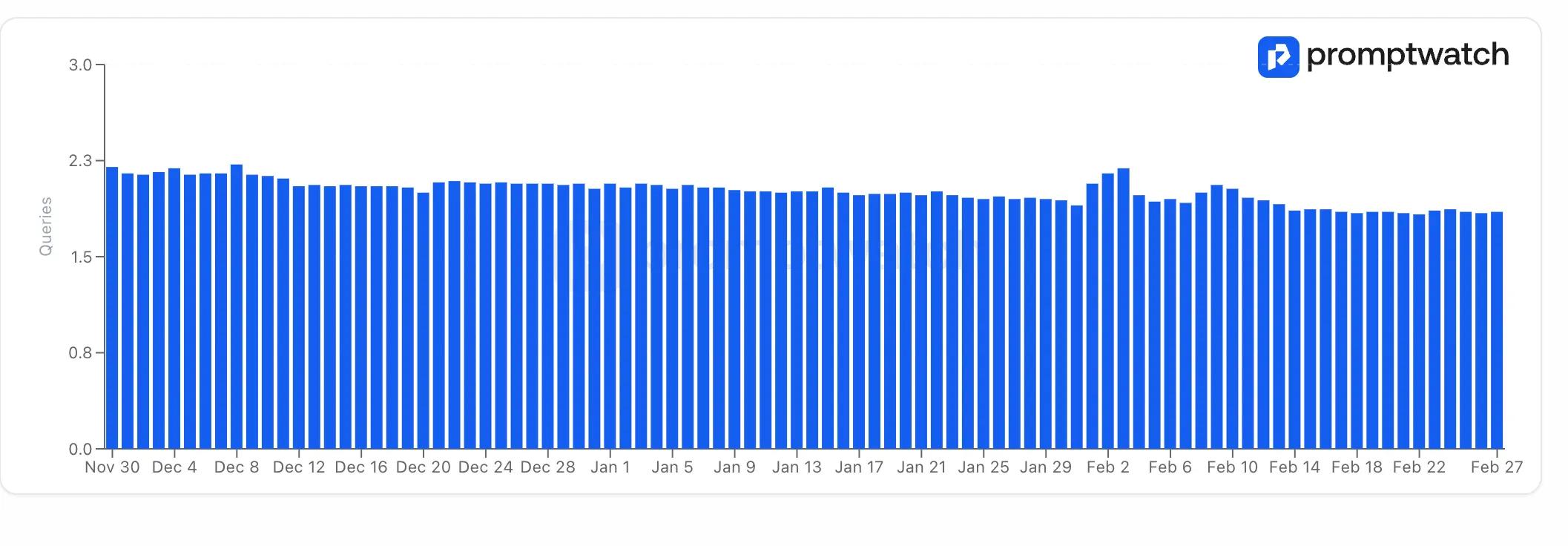

And this isn't an edge case. Our ChatGPT query fan-out data shows the platform consistently averaging between 1.7 and 2.3 sub-queries per response, every day, across every category we track.

It's not one answer your competitor is getting instead of you. It's multiple, every time a buyer searches in your category, across every platform they use, every day.

Think about what that means for visibility. If ChatGPT runs 2 sub-queries per response on average, and your brand appears in none of them, a competitor is filling both slots.

Multiply that across the dozens of category queries your buyers run every week (across ChatGPT, Perplexity, and Google AI Overviews) and the visibility gap compounds fast.
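To put rough numbers on that, here is a minimal back-of-the-envelope sketch. The 1.7 to 2.3 range comes from our ChatGPT fan-out data above; the figure of 50 category queries per week is a made-up assumption for illustration, as is the function name. It covers ChatGPT only, since the fan-out range was measured there.

```python
# Back-of-the-envelope estimate, not a Promptwatch metric.
# Assumption: the brand is cited in none of the sub-query searches.

def missed_searches_per_week(queries_per_week: int, sub_queries_per_response: float) -> float:
    """Number of sub-query searches per week in which the brand was absent."""
    return queries_per_week * sub_queries_per_response

# Hypothetical volume: 50 category queries per week from your buyers.
QUERIES_PER_WEEK = 50

# Lower and upper ends of the observed ChatGPT fan-out range.
low = missed_searches_per_week(QUERIES_PER_WEEK, 1.7)
high = missed_searches_per_week(QUERIES_PER_WEEK, 2.3)

print(f"Roughly {low:.0f} to {high:.0f} sub-query searches per week without your brand")
```

Swap in your own weekly query estimate. The point is only that the gap scales with fan-out, not that these exact numbers apply to your category.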

AI Visibility Already Has Winners and the Gap Is Growing

Citation slots are shrinking with every model update

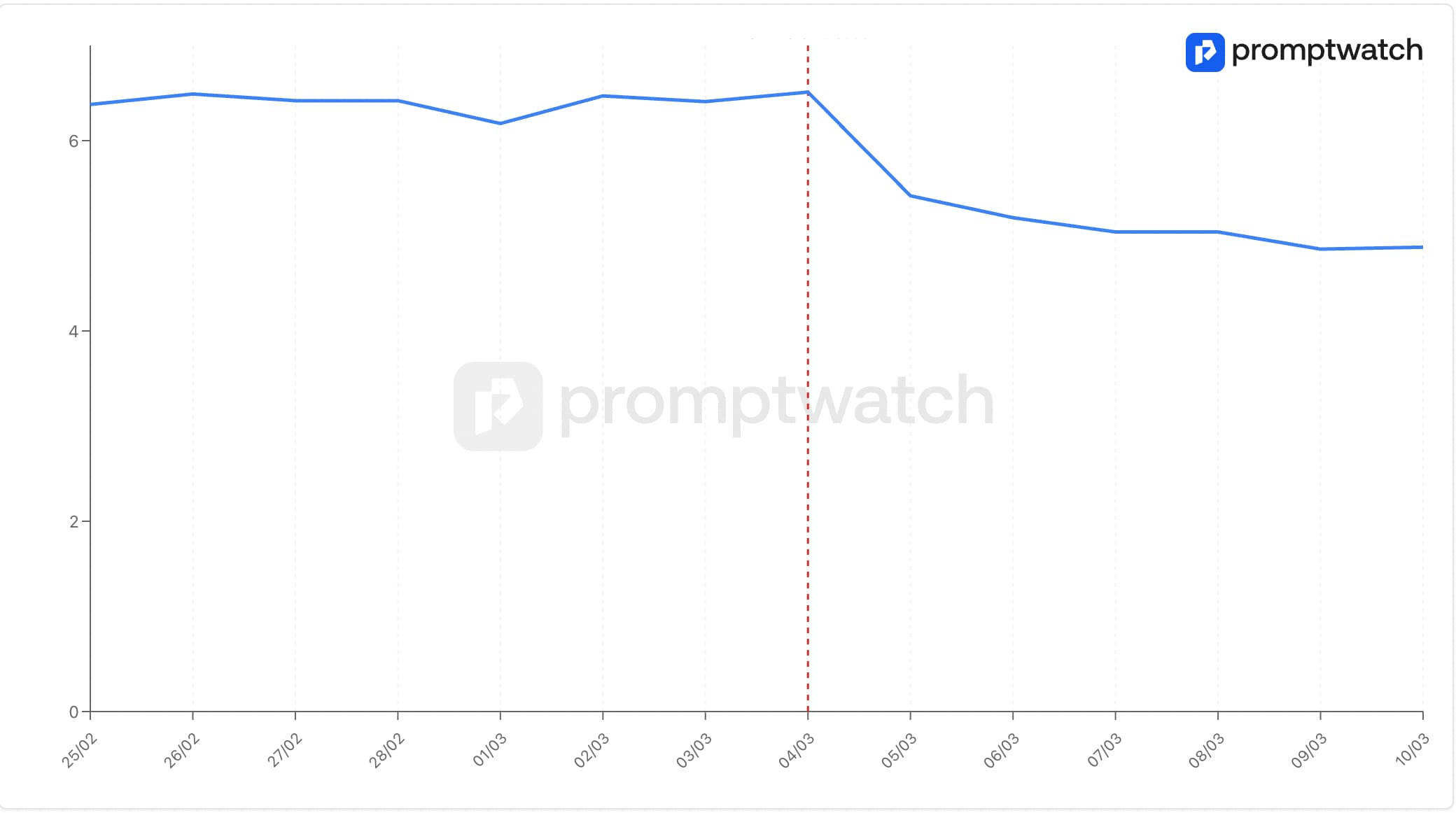

Something changed in March 2026 that most marketing teams haven't noticed yet.

Around the GPT-5.3 rollout on March 4, the average number of sources cited per ChatGPT response dropped noticeably, across all models, not just one. ChatGPT's citation behaviour changed as a whole.

When ChatGPT triggers a web search, it now typically cites between 4 and 6 sources per response. Perplexity cites more, usually between 6 and 10. Google AI Overviews varies by query type.

Think about what that means for any given query in your category. A handful of brands get cited. Everyone else is invisible. Not ranked lower, not on page two, just absent.

The brands currently in those slots aren't there by accident. They got there by building citation presence before the competition intensified.

The window to do the same is still open, but our data shows it's getting narrower with every model update.

Google AI Overviews and ChatGPT don't cite in the same way

With fewer citation slots available, the competition for them plays out platform by platform, and ChatGPT and Google AI Overviews don't play by the same rules.

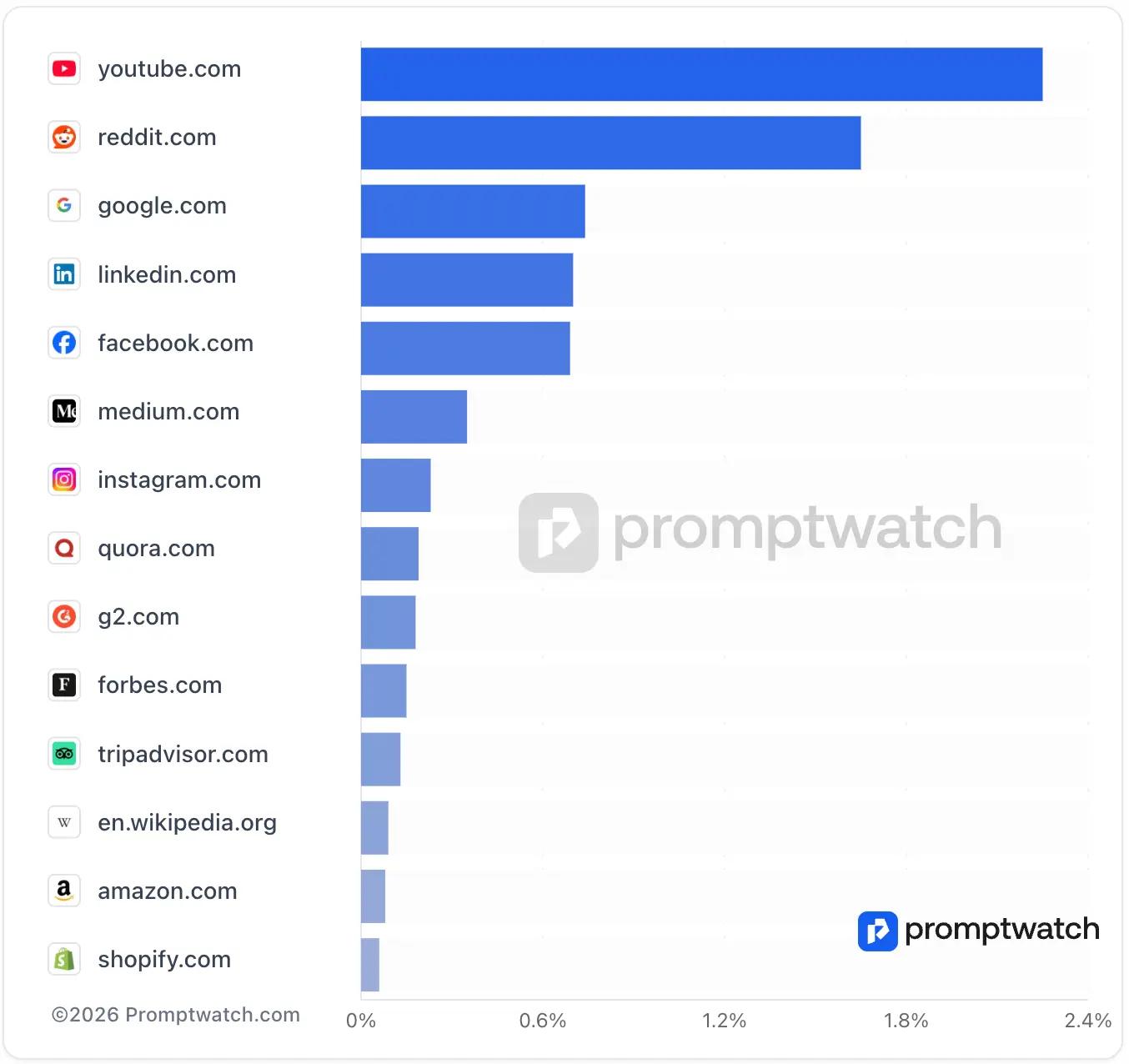

Our Google AI Overviews citation data from February 2026 and Google AI Mode citation data show the same dominant domains at the top of both Google surfaces: YouTube, Reddit, LinkedIn, Google, Facebook.

Certain domains have built a level of structural trust that carries across AI systems, not just within one platform.

But the content format split between ChatGPT and Google AI Overviews is where things get platform-specific. Google AI Overviews cites listicle content at 27.4% compared to ChatGPT's 17.3%.

Landing pages go the other way: 16.9% in ChatGPT, just 5.7% in AI Overviews. A landing page earning consistent citations in ChatGPT may barely register in AI Overviews at all.
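The size of that split is easier to see as a ratio. The sketch below uses only the four percentages quoted above; the dictionary layout and helper name are ours, chosen for illustration.

```python
# Citation share by content format, in percent, as quoted in this article.
CITATION_SHARE = {
    "listicle": {"chatgpt": 17.3, "ai_overviews": 27.4},
    "landing_page": {"chatgpt": 16.9, "ai_overviews": 5.7},
}

def share_ratio(content_format: str, platform_a: str, platform_b: str) -> float:
    """How many times larger the format's share is on platform_a than on platform_b."""
    shares = CITATION_SHARE[content_format]
    return shares[platform_a] / shares[platform_b]

# Listicles: AI Overviews relative to ChatGPT (about 1.6x).
listicle_ratio = share_ratio("listicle", "ai_overviews", "chatgpt")

# Landing pages: ChatGPT relative to AI Overviews (about 3x).
landing_page_ratio = share_ratio("landing_page", "chatgpt", "ai_overviews")

print(f"Listicles: {listicle_ratio:.2f}x more share in AI Overviews")
print(f"Landing pages: {landing_page_ratio:.2f}x more share in ChatGPT")
```

In other words, a landing page holds roughly three times the citation share in ChatGPT that it does in AI Overviews, while listicles lean the other way by a smaller margin.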

This matters for the investment question because it shows the channel has specific, learnable rules. AI citation follows patterns that are consistent and measurable, which means brands that understand those patterns can compete for citation slots.

Reddit and YouTube are the most cited social platforms across every AI platform we track

This is the finding that surprises most marketing teams when they see it for the first time.

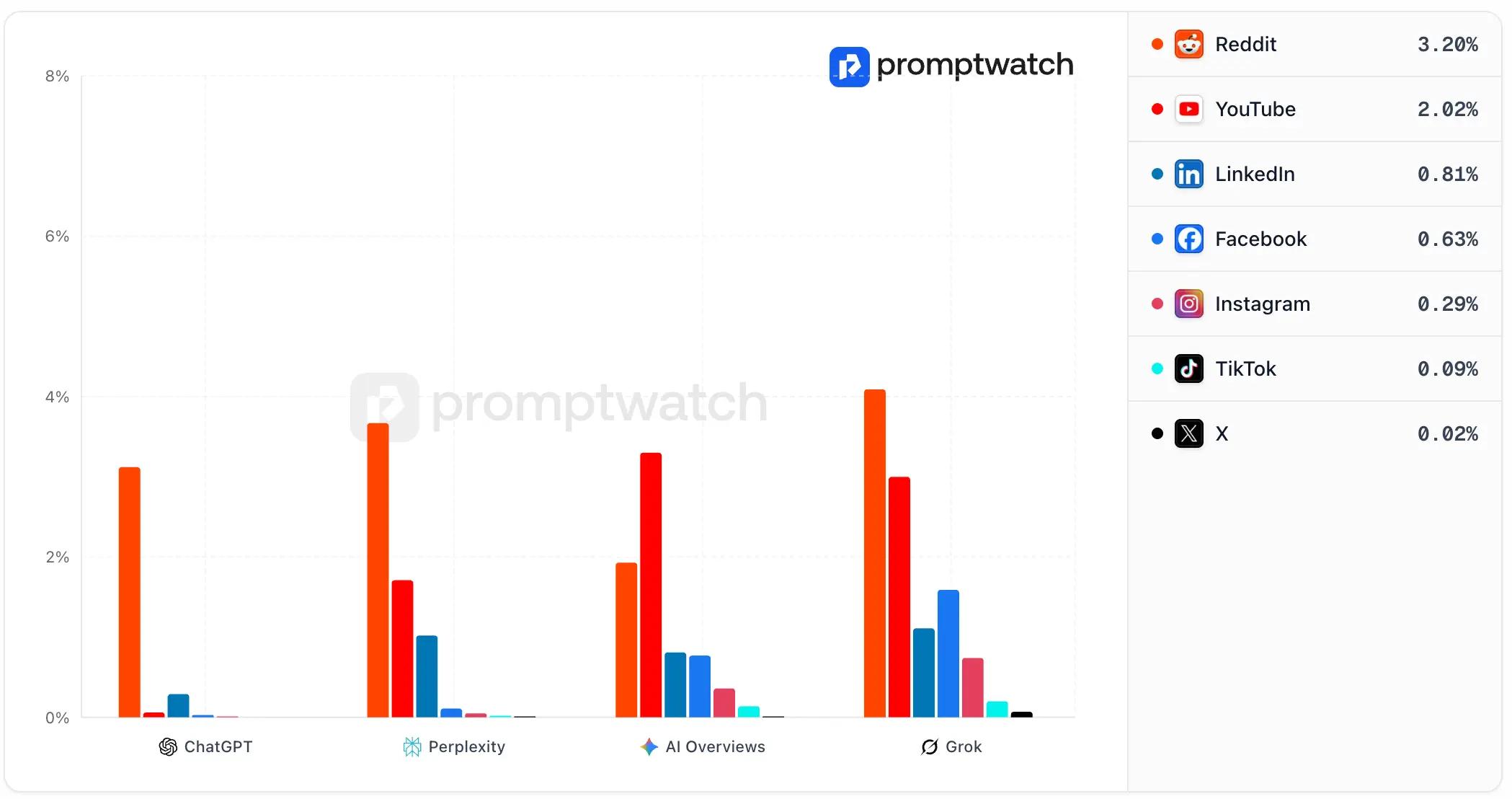

Our social media citation data tracks citation frequency across ChatGPT, Perplexity, Google AI Overviews, and Grok simultaneously.

Reddit accounts for 3.20% of ChatGPT citations. YouTube follows at 2.02%, LinkedIn at 0.81%, and Facebook at 0.63%. Reddit's lead over every other social platform is wide, and it holds across every AI system we track, not just ChatGPT.

The kind of posts that get cited are not what most teams expect. 60% of cited Reddit posts had only 0 to 10 upvotes.

Posts that are 6 months or older have the highest citation rates. You don't need viral content, but you need genuine, specific, experience-based answers in the right communities.

Our February 2026 YouTube citation data puts YouTube at 1.7–1.9% of ChatGPT citations, the #2 cited social platform. YouTube also holds the top position in both Google AI Mode and Google AI Overviews domain rankings.

Subscriber count is not the primary citation factor. A focused channel with 10,000 subscribers publishing specific tutorials will consistently outperform a broad channel with 1 million subscribers.

Both platforms perform well for the same reason: the content they contain maps directly to how buyers phrase questions to AI. A Reddit thread where founders compare their real experience with different tools is more useful to an AI answering a comparison query than a polished brand blog post making the same case.

Google signed a formal data partnership with Reddit for exactly this reason: community content has become structurally important to how AI assigns trust.

Taken together, these data points lead to one conclusion: AI visibility is already a competitive channel, with some brands winning more visibility than others. The brands appearing consistently in these answers aren't waiting to see how AI search develops.

They're already there. And with citation slots shrinking after every model update, the cost of waiting is getting higher, not lower.

Promptwatch Makes Your AI Visibility Measurable

One of the biggest challenges with AI visibility is that it's largely invisible by default. You know AI platforms are influencing how your buyers discover products. But most teams have no clear way to see what that looks like for their brand specifically, which prompts mention them, which don't, and why.

This is the gap Promptwatch is built to close. It tracks your brand's presence at the prompt level across ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews. It shows you whether you appear in AI answers, why, and what's standing in the way.

Here's what that looks like in practice:

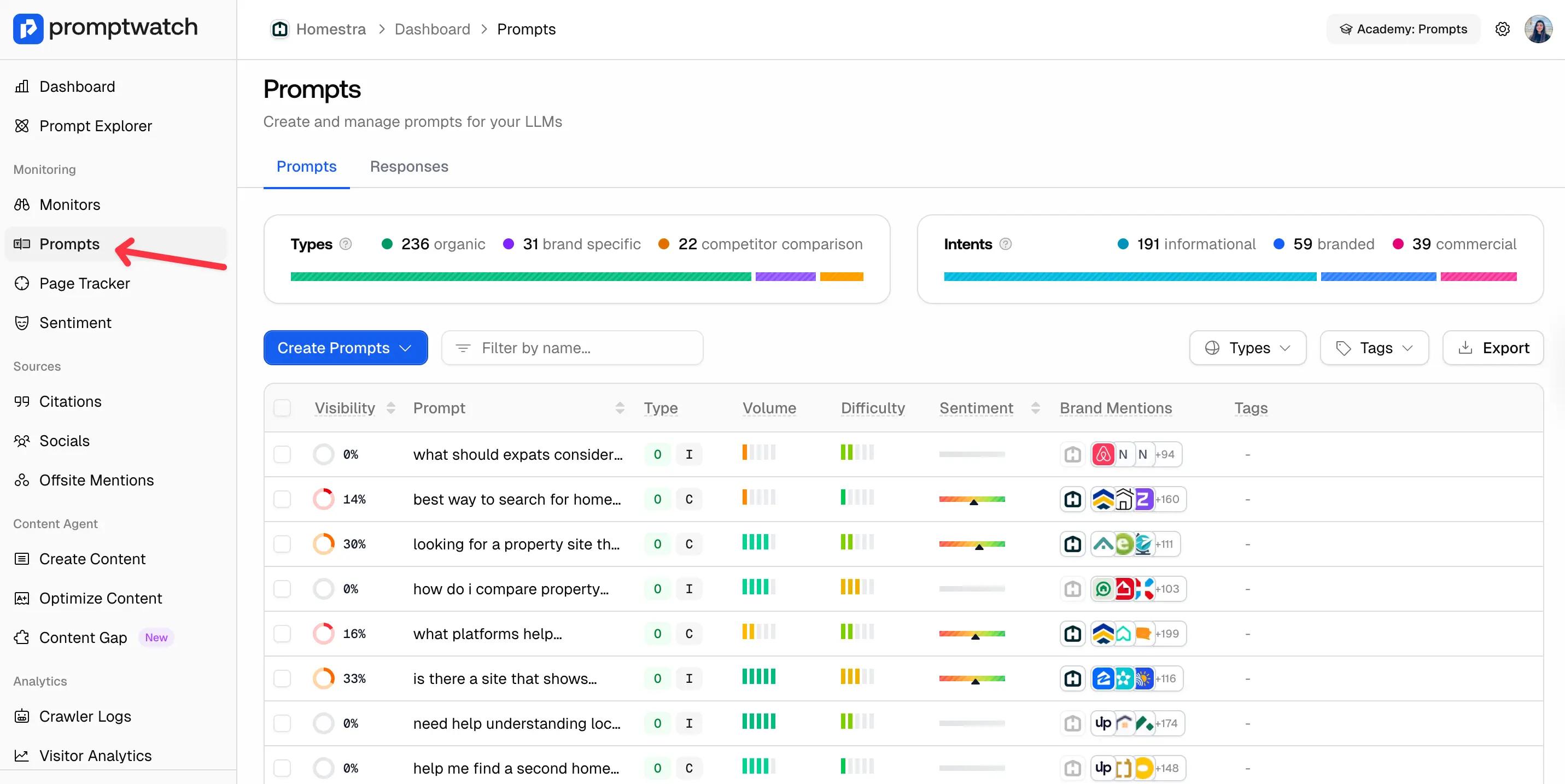

Prompt Tracking: See Exactly Where You Appear and Where You Don't

The starting point for any AI visibility strategy is knowing which prompts mention your brand and which ones hand the answer to a competitor.

Promptwatch tracks this at the prompt level, showing you visibility scores, search volume, and difficulty across ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews.

For each prompt, you can see the full response, which brands appear, which sources get cited, and whether your brand is present, absent, or misrepresented.

When you first set up tracking, start with around 30 prompts. That gives you a solid foundation across different intent types: informational, comparison, branded, transactional.

From there, you can track your overall visibility score over time and see exactly which prompts are costing you the most ground right now.

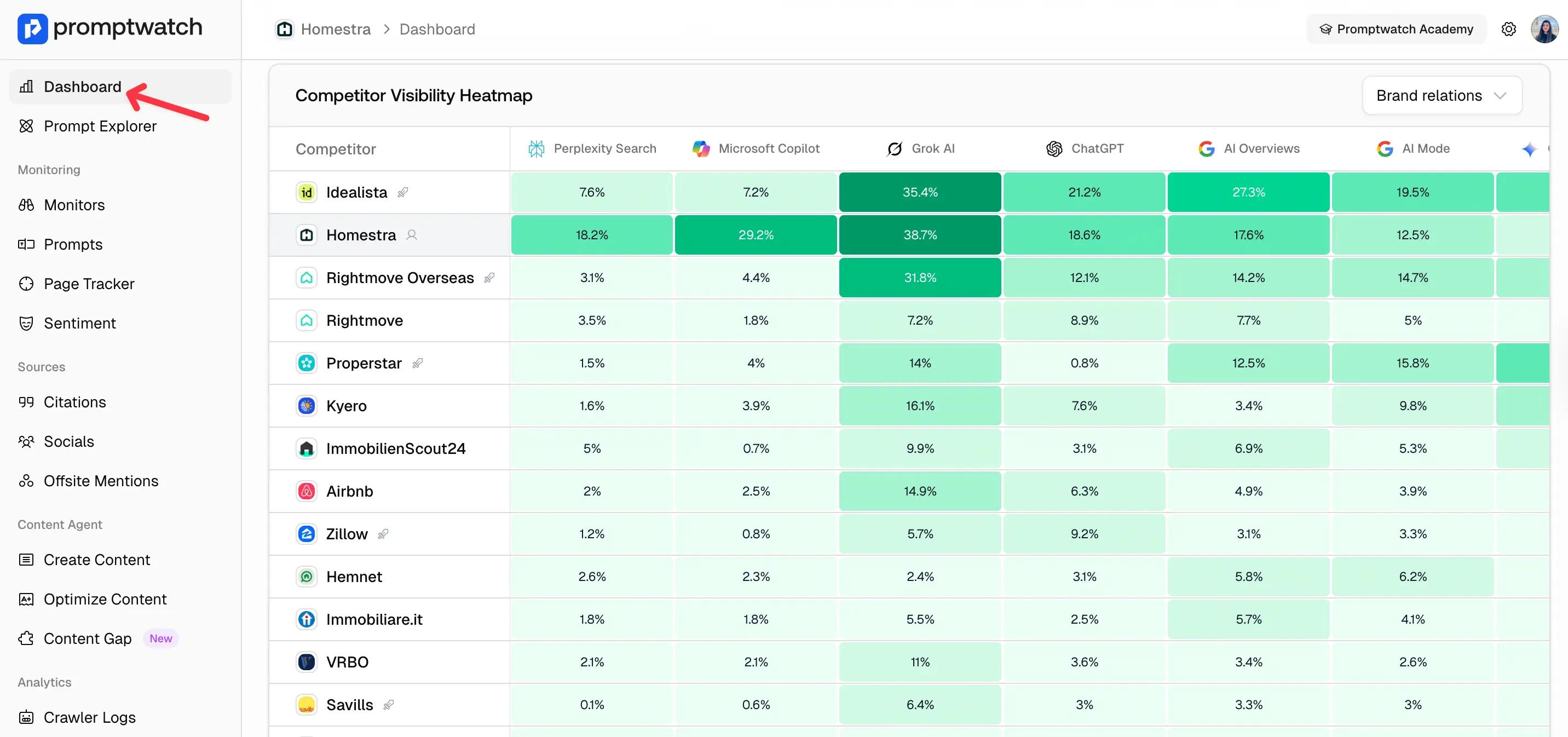

Competitor Visibility Heatmap: Know Exactly Where You're Losing Ground

Knowing your own citation numbers is useful. Knowing how they compare to your competitors is what actually drives decisions.

The Competitor Visibility Heatmap shows your citation share against named competitors across ChatGPT, Perplexity, Copilot, Grok, and Google AI Overviews (all in one view).

What the heatmap makes immediately visible is where your brand is strong and where it's exposed.

If three competitors consistently outrank you on a platform and your content strategy hasn't accounted for it, that's a decision waiting to be made.

You might be performing well on ChatGPT but nearly invisible on Perplexity, and that gap matters depending on who your buyers are and where they search.

The heatmap shows you that split in one view, across every competitor you want to track, updated in real time.

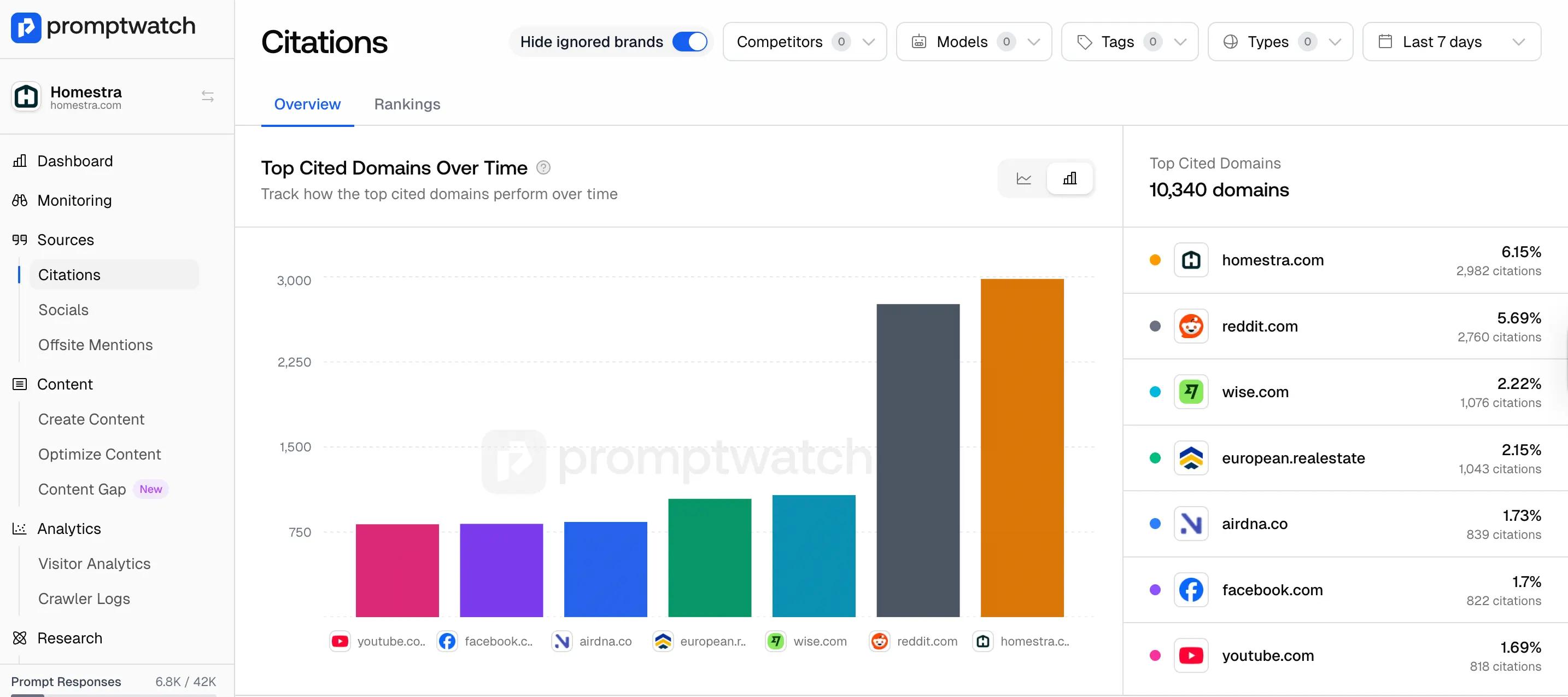

Citation Analysis: Understand Which Sources AI Trusts in Your Category

Prompt tracking tells you whether you appear. Citation Analysis tells you why you don't and who is winning those citations instead.

It shows the exact URLs being cited in your category, what type of source they are (corporate landing page, news article, social post), their traffic, their position, and how that position is changing over time.

If a competitor's landing page has been sitting at position 2 in AI citations for the past few months and yours isn't appearing at all, that's not a guess. It's a specific page you can analyze, learn from, and compete with.

Citation Analysis gives you the exact URL, the source type, the traffic it's getting, and how long it's been there.

It also shows you something most teams miss: it's not just corporate pages winning citations. News articles and social posts appear alongside landing pages in the same citation pool. Understanding that mix (and where your brand is absent from it) is what turns citation data into a content strategy.

Crawler Logs: Find Out If AI Can Actually Read Your Pages

Most AI visibility tools show you what's happening in AI answers. Very few can tell you what's happening before that: whether AI crawlers are actually reaching your pages in the first place.

This is a problem most teams discover too late. AI crawlers don't trigger Google Analytics. They visit your pages, make decisions about whether to cite them, and leave without any trace in your standard reports.

According to Promptwatch's AI Search report, 1 in 4 websites now receives daily visits from AI crawlers. Most of those visits never appear in any dashboard.

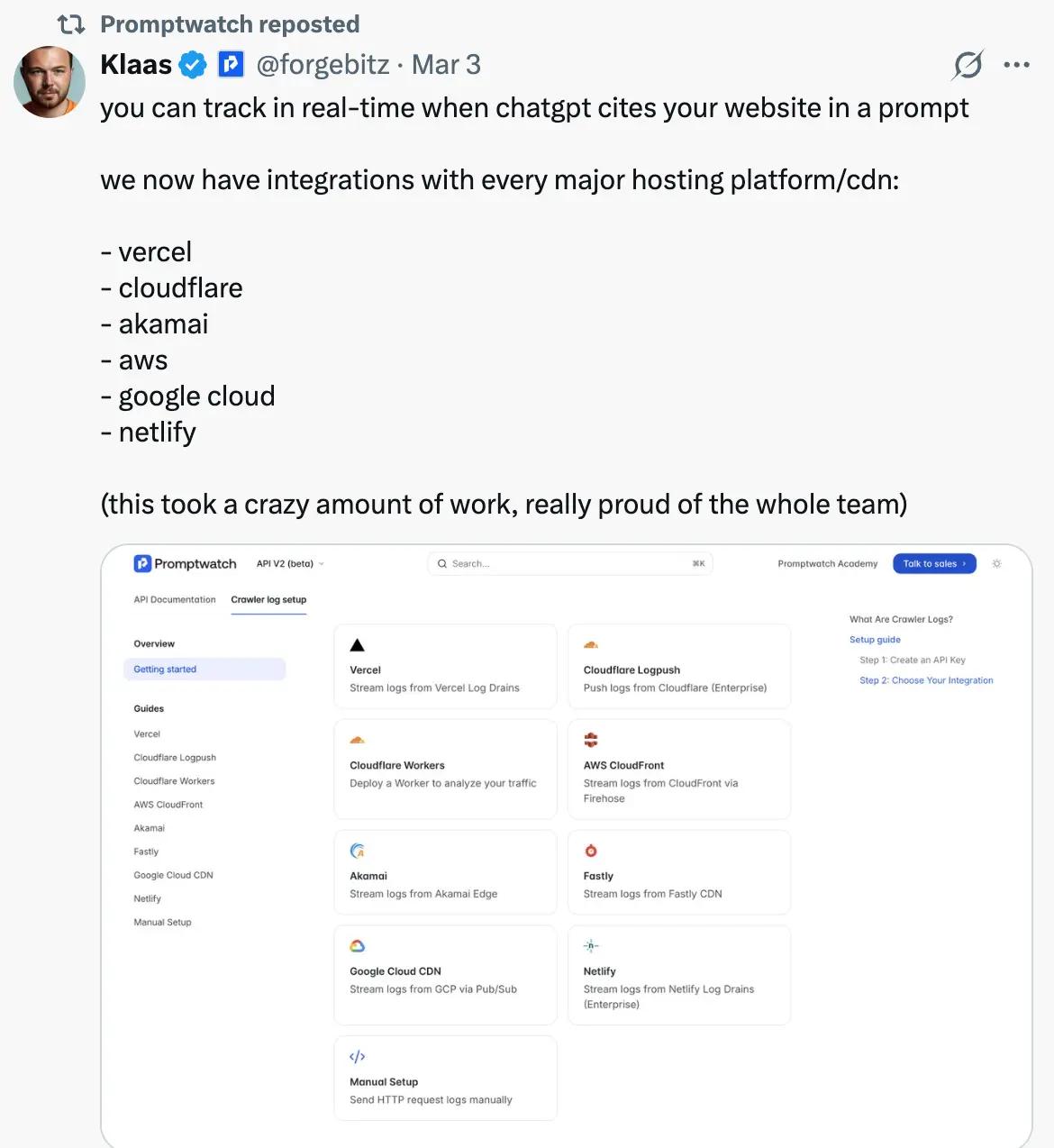

On top of that, CDN security settings (Cloudflare, Vercel, AWS) can silently block AI crawlers before they ever reach your server. If that's happening, no content strategy will fix your citation gap. Solving this required building something most GEO tools haven't bothered with: a direct integration at the network level, before requests even reach your server.

Promptwatch connects directly with Cloudflare, Vercel, Akamai, AWS, Google Cloud, and Netlify to capture every AI crawler visit including the ones that were blocked or filtered out entirely.

The result is a complete picture of which AI systems are visiting your site, how often, and whether that activity is translating into actual citations.
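If you want a feel for what this data looks like before connecting anything, here is a minimal sketch that counts AI crawler hits in a web server access log. It is not how Promptwatch works internally. It assumes logs in the common "combined" format, where the user agent is the last quoted field, and it matches on user-agent tokens that the vendors have documented publicly (the list is illustrative, not exhaustive). The sample log lines are made up. Note that origin server logs like these cannot show requests your CDN blocked before they reached you.

```python
import re
from collections import Counter

# User-agent substrings for some publicly documented AI crawlers.
# Illustrative only: check each vendor's documentation for the current list.
AI_CRAWLER_TOKENS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot"]

# In the combined log format, the user agent is the last quoted field on the line.
USER_AGENT_PATTERN = re.compile(r'"([^"]*)"\s*$')

def count_ai_crawler_hits(log_lines):
    """Return a Counter of hits per AI crawler token found in the user agent."""
    hits = Counter()
    for line in log_lines:
        match = USER_AGENT_PATTERN.search(line)
        if match is None:
            continue  # line does not end with a quoted user agent
        user_agent = match.group(1)
        for token in AI_CRAWLER_TOKENS:
            if token in user_agent:
                hits[token] += 1
                break  # count each request once
    return hits

# Made-up sample lines for illustration (documentation-range IP addresses).
sample_log = [
    '203.0.113.5 - - [04/Mar/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.6 - - [04/Mar/2026:10:01:00 +0000] "GET /blog HTTP/1.1" 200 8040 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.7 - - [04/Mar/2026:10:02:00 +0000] "GET / HTTP/1.1" 200 3000 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '203.0.113.5 - - [04/Mar/2026:10:03:00 +0000] "GET /docs HTTP/1.1" 200 6100 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
]

crawler_hits = count_ai_crawler_hits(sample_log)
for token, count in crawler_hits.most_common():
    print(f"{token}: {count}")
```

User-agent strings can be spoofed, so treat counts like these as a first signal rather than proof of who is visiting.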

If crawlers are visiting a page consistently, but it's not appearing in AI answers, the issue is usually the content, not the access. AI got in, read the page, and didn't find what it needed. For the full technical setup, our guide on how to see which AI bots are crawling your site walks through the process.

Content Gap Recommendations: Know Exactly What to Build Next

By this point, you have a complete picture of your AI visibility. You know which prompts mention your brand and which don't.

The next question is: what do you build to close those gaps?

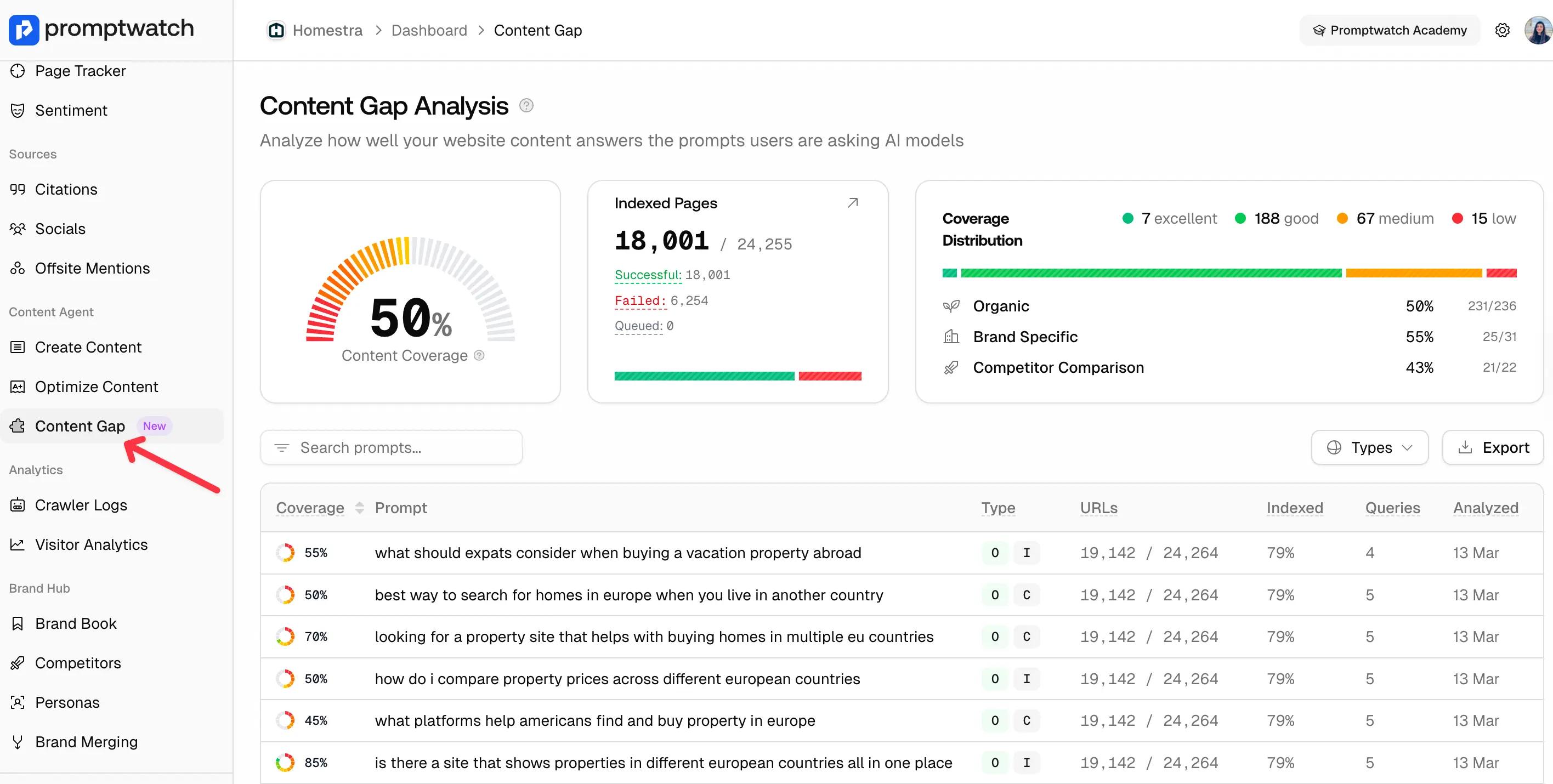

Content Gap Analysis answers that directly. It analyses how well your existing content covers the prompts your buyers are asking AI, showing you coverage scores by prompt type, which pages are indexed and which are failing, and where your content is strong versus where it's leaving opportunities open.

In this example, organic prompt coverage sits at 50% and competitor comparison coverage at 43%. That means for more than half the prompts where buyers are comparing options in this category (57%), the content isn't there to support a citation.

From there, Promptwatch's Create Content and Optimize Content features let you act on what the analysis surfaces, generating new content built around the citation gaps, or optimizing existing pages so AI systems can actually extract and use them.

Here’s a quick walkthrough of Promptwatch’s content workflow.

Start Tracking AI Visibility with Promptwatch

AI visibility isn't a future investment. It's a present one.

The citation patterns are already forming, the gaps are already widening, and the brands showing up consistently in AI answers today aren't waiting to see how this develops.

The only thing left is to see where you stand. Try Promptwatch free for 7 days.